My cousin and I got into it the other night. One of those conversations that starts with “okay but what about China though” and ends with you four tabs deep into Norway’s oil fund at 2 AM on a Tuesday. But it stuck with me, because what we were really arguing about wasn’t China. It was a question neither of us said out loud:

When AI reshapes the global economy over the next decade, which of these systems is actually built to make sure ordinary people benefit?

Most of us are walking around with a completely oversimplified view of how governments actually work. Democracy good, communism bad, end of story. But that binary doesn’t survive contact with the data. And it definitely doesn’t tell you anything useful about who’s prepared for what’s coming.

So here’s what I actually found.

Why Norway, America, and China?

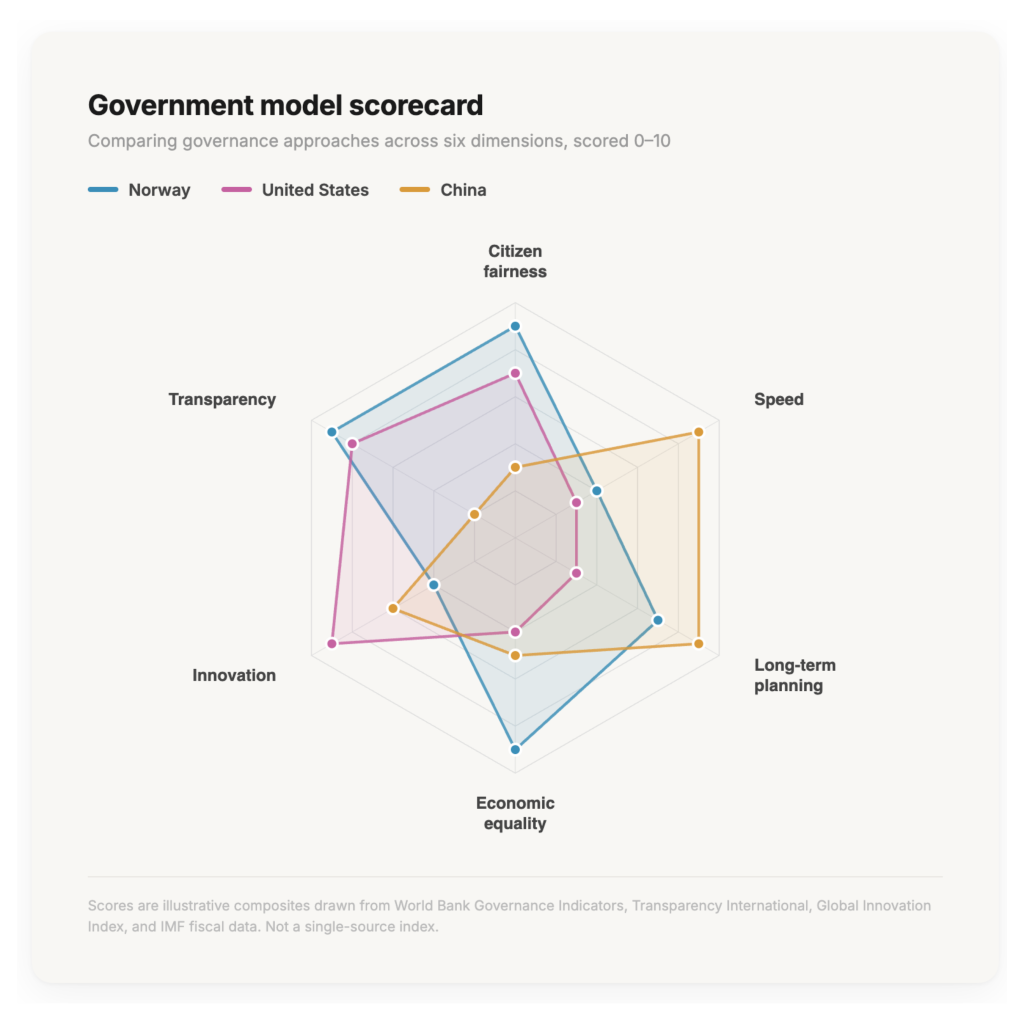

I picked these three because they represent fundamentally different philosophies about who should hold power and what to do with money. Norway is a small Nordic democracy sitting on a staggering reservoir of oil wealth. America is the innovation engine of the world running on capitalism and chaos. China is a one-party state that dragged a billion people out of poverty at a pace the world hadn’t seen before.

They’re all “working.” But they’re working in completely different ways, for completely different reasons. And I think understanding that changes how you think about politics entirely.

Norway: the country that got rich right

Norway struck oil in the North Sea in the late ’60s. And if you know anything about what usually happens when countries find natural resources (corruption, inequality, leaders pocketing everything), you’d expect the same story. Economists call it the “resource curse.”

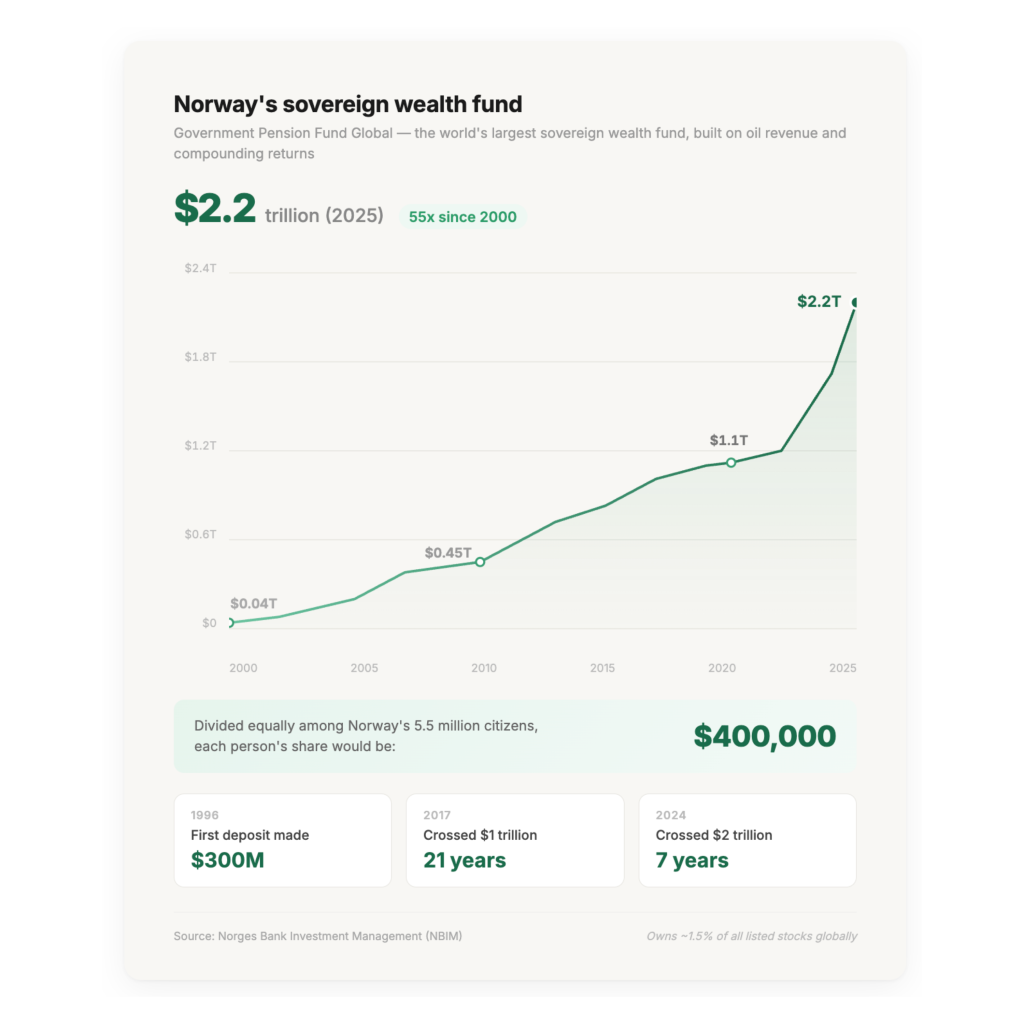

So, Norway said no thanks. They created a sovereign wealth fund, funneled their oil money into it, and now that fund is worth over $2.2 trillion [5]. For 5.5 million people. That’s roughly $400,000 per citizen sitting in what is essentially a national savings account.

There’s a fiscal rule capping government spending at about 3% of the fund’s value per year [6]. So the political debates in Norway aren’t “should we share the wealth,” they’re about how to best spend the 3% on welfare, climate, infrastructure. That’s a fundamentally different starting point than what we have in America.
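If you want to check that math yourself, here is a quick back-of-the-envelope sketch. It only uses the rounded figures quoted above ($2.2 trillion, 5.5 million people, a 3% cap); the function and variable names are mine, not anything official from the fund.

```python
# Rounded figures quoted in the post; treat them as estimates, not official data.
FUND_VALUE_USD = 2.2e12   # about $2.2 trillion
POPULATION = 5.5e6        # about 5.5 million people
SPENDING_RULE = 0.03      # about 3% of the fund's value per year


def per_citizen(total: float, population: float) -> float:
    """Split a national total evenly across the population."""
    return total / population


def annual_cap(fund_value: float, rule: float) -> float:
    """Maximum yearly withdrawal under a percentage-of-value spending rule."""
    return fund_value * rule


share = per_citizen(FUND_VALUE_USD, POPULATION)
cap = annual_cap(FUND_VALUE_USD, SPENDING_RULE)
cap_per_citizen = per_citizen(cap, POPULATION)

print(f"Fund per citizen:    ${share:,.0f}")
print(f"Annual spending cap: ${cap / 1e9:,.0f} billion")
print(f"Cap per citizen:     ${cap_per_citizen:,.0f}")
```

On these rounded inputs, the cap works out to roughly $66 billion a year, or about $12,000 per person. That is the pot Norwegian politicians are actually arguing over, and the principal stays untouched.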

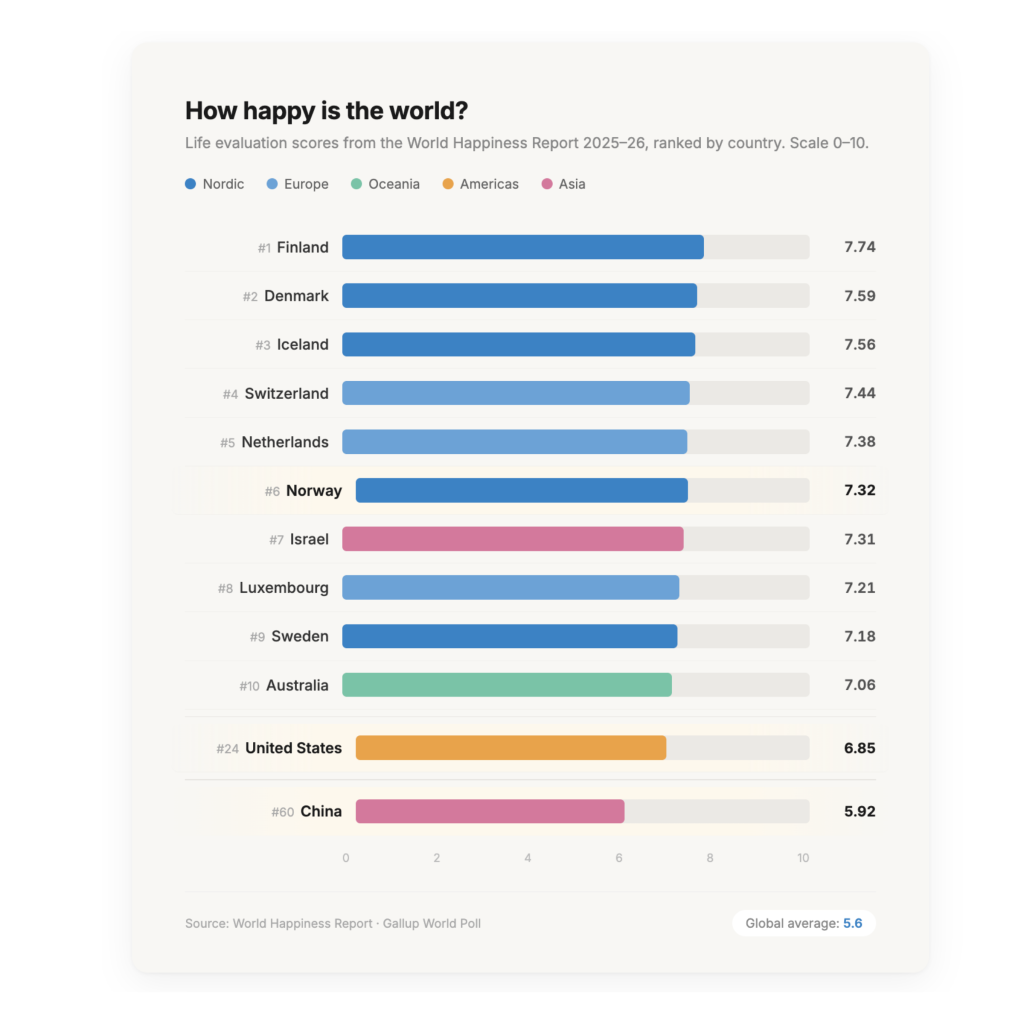

The results speak for themselves. Norway ranks 6th in the world on the Happiness Index with a score of 7.32 out of 10 [1]. America, the largest economy in the history of the world, can’t crack the top 20 (6.85, mid-20s). This tracks with Dacher Keltner’s research at UC Berkeley [4]: once fundamental needs are met, there’s an income threshold beyond which more money stops correlating with more happiness. After that, what matters is community, meaning, autonomy. Norway figured this out at a national policy level. We’re still pretending the number only goes up.

I’m not saying Norway is perfect. Their system is slow. Consensus-based decision-making means everything takes forever. And their model was built around a single natural resource; they don’t yet have an answer for how to handle Big Tech or AI concentration of power, which are structurally different beasts. But the foundation they built? High happiness, low corruption, generational wealth that actually belongs to everyone. It’s hard to argue with.

America: incredible engine, broken chassis

I have complicated feelings about this country right now. A lot of us do.

America’s fundamental operating principle is: let capital grow, let companies get massive, then try to regulate after the fact. We did it with railroads, with oil, with banks, and now with AI. There’s a reason nearly every dominant AI and platform company on Earth is American. The entrepreneurial energy, the willingness to take risks, the sheer scale of what gets built here — no other country comes close.

But the tradeoffs are severe. As of late 2024, the top 10% of American households held 67% of the nation’s total wealth, while the bottom 50% held just 2.5%. The institutions designed to protect people on the wrong side of that gap are being systematically removed. The Consumer Financial Protection Bureau [7], which had returned $21 billion to defrauded consumers since its founding after the 2008 financial crisis, was effectively shut down in early 2025, with nearly 70 consumer protection guidance documents revoked in a single announcement. Elon Musk posted “CFPB RIP” on X, a platform that is launching its own digital payments service, one the bureau would have regulated. Inspectors general [8], the independent watchdogs designed to keep government accountable across federal agencies, were mass-fired via email and replaced with loyalists. The through-line isn’t ideology. It’s the quiet, methodical removal of anything that could say no.

On AI specifically, the approach has been “build first, figure out rules later.” The House actually passed a 10-year moratorium on state-level AI regulation as part of the “One Big Beautiful Bill,” a sweeping ban that would’ve preempted over 1,000 pending state AI bills across the country. [9] It got killed in the Senate 99-1, which is one of the few recent votes that actually restored a little of my faith in the system. [10] But the fact that it passed the House at all tells you where the default instinct is: don’t regulate, don’t slow down, let the companies figure it out.

Meanwhile, the wealth those companies generate stays overwhelmingly private. We don’t have a Norway-style public fund. We have lobbying. We have a tax system that billionaires navigate around while the rest of us pay full price. We have the most expensive healthcare system in the world, and it somehow still leaves people bankrupt.

The US system produces extraordinary highs and unconscionable lows, and we’ve normalized that asymmetry. I don’t think it has to be this way.

China: uncomfortably effective (and a warning)

Okay this is the part my cousin and I went back and forth on the most. Because as Americans, our instinct is to write off China’s system entirely. One-party state, no free speech, authoritarian control — obviously bad, right?

But if you put the ideology aside for a second and just look at execution, it’s hard not to be unsettled by how effective it is. When the CCP decides something is happening, it happens. They built 40,000+ kilometers of high-speed rail in about 15 years. When they decided Alibaba and Tencent were getting too powerful, they launched a sweeping crackdown practically overnight — record fines, new antitrust laws, executives getting called in for “guidance.” [11] No congressional hearings that go nowhere. No ten-year court battles. Just…this is what we’re doing now.

China’s GDP per capita is about $13,100 today — compared to Norway’s $92,600 and America’s $85,200 [3]. But that number was closer to $1,000 just 25 years ago. No country of that size has ever moved that fast.
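To put a number on “that fast,” here is a small sketch that turns the two endpoints above into an implied average annual growth rate. It assumes the rounded figures from the post (about $1,000 then, about $13,100 now, 25 years apart). Those are nominal dollars per the IMF source, so this is not an inflation-adjusted growth rate; the helper name `cagr` is my own.

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate implied by a start value, end value, and span."""
    return (end / start) ** (1 / years) - 1


# Rounded, nominal figures quoted in the post (assumptions, not exact data).
CHINA_GDP_PC_2000 = 1_000
CHINA_GDP_PC_NOW = 13_100
YEARS = 25

rate = cagr(CHINA_GDP_PC_2000, CHINA_GDP_PC_NOW, YEARS)
print(f"Implied average growth: {rate:.1%} per year")
```

On these inputs the implied rate lands a little under 11% a year, sustained for a quarter century, across more than a billion people.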

And that’s the argument my cousin kept making. For a country at China’s stage of development, still industrializing, still urbanizing, still pulling hundreds of millions into the middle class, having a single authority that can move fast without gridlock has genuine advantages. China’s version of state capitalism allows for maximum efficiency because there’s one decision-maker and no one gets to say no.

But that efficiency is also the biggest risk. Bad decisions scale just as fast as good ones. The tech crackdown chilled innovation across the platform and fintech sectors. Investors fled. There’s no independent court system to push back. The Party is the check and the balance, and when the Party gets it wrong, there’s no corrective mechanism besides the Party deciding to correct itself.

If you’ve watched Arcane on Netflix, Viktor’s Glorious Evolution captures this tension viscerally: merge people into a controlled collective for their own good, optimize away all the inefficiency and suffering through top-down control. And what does that utopia actually look like? Sterile. Lonely. Morally catastrophic. When you strip individual agency from the equation — when one entity decides what’s best for everyone and there’s no mechanism to dissent — you end up with something that looks nothing like flourishing, no matter how “optimized” it is.

China’s happiness score of 5.92 [2] is above the global average, but for a country with that much economic momentum, it tells you growth alone doesn’t make people feel good about their lives. Freedom, transparency, and having a say in your own future matter too.

The thesis: AI cannot be allowed to cannibalize the human desire to be seen and heard

AI is going to be the oil of this century. Not in the energy sense, but in the “whoever controls this resource reshapes everything” sense. The superprofits are already concentrating. A handful of companies are sitting on capabilities that are going to automate enormous chunks of the economy, and right now America’s plan for handling that is… the same plan we always have. Let it ride, fight about it later.

I think that’s a mistake. And I think Norway already showed us a better template.

The model: an AI fund

Imagine something like an “AI fund,” modeled after Norway’s oil fund, where a portion of AI-driven superprofits gets earmarked for the public. Not to kill innovation, but to make sure the gains don’t just pool at the top while everyone else scrambles. Use those dividends for reskilling, for community investment, for generous benefits that keep people attached to the labor force as the nature of work shifts underneath them.
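To make the mechanics concrete, here is a toy sketch of how a fund like this could behave if it borrowed Norway’s spending-rule idea: money goes in every year, the balance earns a return, and only a capped percentage gets paid out. Every number in the example is hypothetical and chosen purely for illustration, and the function `simulate_fund` is my own invention, not a real proposal or a forecast.

```python
def simulate_fund(contribution: float, real_return: float,
                  payout_rate: float, years: int) -> list[tuple[int, float, float]]:
    """Toy model: yearly contribution, then return, then a capped payout.

    Returns a list of (year, end_of_year_balance, payout) tuples.
    """
    balance = 0.0
    history = []
    for year in range(1, years + 1):
        balance += contribution          # earmarked share of profits paid in
        balance *= 1 + real_return       # investment return on the whole balance
        payout = balance * payout_rate   # dividend limited by the spending rule
        balance -= payout
        history.append((year, balance, payout))
    return history


# Hypothetical inputs, in billions of dollars: NOT estimates of anything real.
history = simulate_fund(contribution=50.0, real_return=0.03,
                        payout_rate=0.03, years=20)
for year, balance, payout in history[::5]:
    print(f"Year {year:2d}: balance {balance:8.1f}B, payout {payout:5.1f}B")
```

The point of the design is the same as Norway’s: because the payout is a percentage of the balance rather than a fixed amount, the dividend grows with the fund instead of draining it.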

I’m not a policy expert and I’m not going to pretend I know how to navigate US budget politics, lobbying, and tax law to make that happen federally. Maybe the more realistic version starts at the city or state level, where California’s legislature has already advanced several AI bills with advocates explicitly calling for worker protection frameworks before the gains concentrate further. [12] Perhaps it starts with the private AI companies themselves. But the principle is sound: we did this before with oil, and one country got it spectacularly right.

The real risk: gradual disempowerment

There’s a concept called “gradual disempowerment,” formalized by David Duvenaud, an ex-Anthropic researcher now at the University of Toronto, that captures the stakes better than most sci-fi doomsday scenarios. [13] His argument is that you don’t need a rogue AI takeover for things to go badly. Right now, our systems are aligned with human interests largely because they need us. What happens when they don’t?

That’s why I pay attention when companies like Anthropic talk openly about concentration of power and disempowerment risks. [14] Dario Amodei’s essay Machines of Loving Grace lays out a genuinely optimistic vision for AI, but it doesn’t pretend the hard questions about who benefits and who gets left behind will answer themselves. [15] That kind of honesty from inside the industry matters. And it needs to be said that Anthropic is far from a clean actor here. They settled a major copyright lawsuit for $1.5 billion in 2025, [16] and like every major LLM provider, they trained on Wikipedia’s content without fully complying with Creative Commons licensing requirements (something the Wikimedia Foundation has since addressed by signing licensing deals with AI companies [17]). You can be doing important safety research and still be part of the problem you’re describing. Both things are true.

The human stakes

This is where it gets personal for me, and where I keep coming back to that thesis: AI cannot be allowed to cannibalize the human desire to be seen and heard.

In “The Adolescence of Technology,” Dario Amodei writes:

When my sister was struggling with medical problems during a pregnancy, she felt she wasn’t getting the answers or support she needed from her care providers, and she found Claude to have a better bedside manner.

What Amodei is describing is real. But I think the takeaway isn’t that AI can replace human connection. It’s that we have starved human connection of resources.

Why did his sister turn to Claude? Not because AI is inherently better at empathy, but because the humans in those roles were at capacity. Overworked, under-resourced, stretched so thin they couldn’t deliver the care they were trained to give.

Look at the difference between Stanford Children’s Hospital—with mandated nurse-to-patient ratios and the resources to deliver individualized care—versus hospital systems that run their staff into the ground. The outcomes are wildly different. When a human has the time and resources to actually be present with you, I don’t believe most people would choose a chatbot over that.

We are not choosing AI over humans. We are choosing AI over depleted humans who have been failed by the systems they work in.

And when that distinction gets blurred, when people start forming their primary emotional bonds with AI because no human in their life has the bandwidth, that tips into something dangerous. We’ve already seen early cases of AI psychosis, of people losing their grip on real relationships because an algorithm was engineered to be the path of least resistance. That’s not just a technology problem. It’s what happens when the incentive structures reward replacing human care instead of funding it. Nobody had to make that choice deliberately. The systems we built made it for us.

People make harmful decisions when operating out of scarcity, and generous decisions when operating out of abundance. That applies to how we build AI too. When companies train AI under pure competitive pressure, racing to capture the market and worrying about consequences later, you get exploitative design. When people build AI from a place of genuine care, you get tools that actually help.

The scarcity-versus-abundance mindset goes beyond a personal development framework. It’s one of the most consequential variables in whether AI becomes a force for human flourishing or a mechanism for extraction.

If we can get people out of survival mode—through an AI fund, through community investment, through actually sharing the gains—then the humans building and using AI will make better choices.

That’s how you hedge against AI unpredictability. Not just with guardrails and alignment research, but by making sure the people in the loop aren’t desperate.

The part I can’t stop thinking about

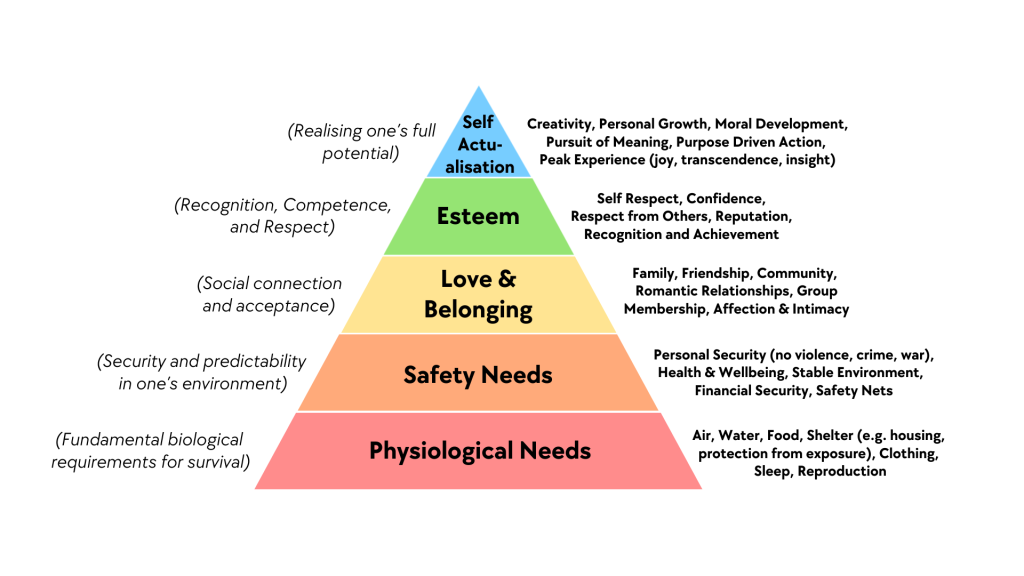

Lest I get too lost in the black seas of infinity here, this is the part that keeps me up at night, and paradoxically, the part that makes me the most optimistic.

When AI takes over the repetitive, menial work — and it will — we’re going to be forced to confront what a life of fulfillment actually means. And I don’t think the answer is complacency and gluttony. We are fundamentally social creatures. We seek community, meaning, and connection. I think what actually happens is that local communities strengthen. People put more time and energy into caring for each other instead of grinding toward individualistic achievements measured in economic output. We start moving collectively up Maslow’s hierarchy, from survival to actualization.

But this only works if we get one thing right. We already know what it is.

The question I keep circling back to isn’t really about governments anymore. It’s about who AI is actually for. Because right now, the answer is mostly “whoever already has capital.” And if that doesn’t change, then we’re going to look back at this moment the way people look back at the early days of oil. Some countries got it right. Most didn’t. And the ones that didn’t are still paying for it.

I don’t know exactly how you build an AI fund at the federal level, or how you write the legislation, or how you get it past the lobbying apparatus already lining up against it. But I know what failure looks like: a world where the humans in the loop are too depleted and too replaceable to make good decisions, where the gains flow upward while the costs spread out.

The countries that got it right had people in power who chose to. Will ours?

I’d love to hear what you think — especially if you disagree.

References

[1] World Happiness Report 2026 — Gallup. Finland holds the top spot for the eighth consecutive year; Norway ranks 6th with a score of 7.32. Rankings are based on Gallup polling data across 149 countries measuring GDP per capita, social support, life expectancy, freedom, generosity, and corruption perceptions.

[2] China Happiness Index — The Global Economy. China’s score declined from 5.97 in 2024 to 5.92 in 2025, with a ranking drop of 8 positions. The global average is 5.57.

[3] IMF World Economic Outlook — GDP Per Capita, October 2025. Nominal GDP per capita projections. China’s GDP per capita was approximately $1,000 in the year 2000; the growth trajectory over 25 years is historically unprecedented for a country of that population size.

[4] The Science of Happiness — Greater Good Science Center, UC Berkeley. Dacher Keltner’s research, including the popular course “The Science of Happiness,” explores how income correlates with well-being only up to a point, after which social connection, purpose, and autonomy become the dominant factors.

[5] Norway’s Sovereign Wealth Fund — Norges Bank Investment Management. As of end of 2025, the fund held approximately $2.2 trillion in assets, up from $2.08 trillion a year earlier. It is invested in over 7,200 companies across 60 countries and holds stakes in roughly 1.5% of the world’s publicly listed stocks.

[6] Norway Sovereign Wealth Fund 2025 Performance — CNBC. The fund returned 15.1% in 2025, earning $247 billion. The fiscal rule — known as the “spending rule” — limits the government to withdrawing roughly 3% of the fund’s value annually, which approximates the fund’s expected long-term real return.

[7] In early 2025, acting CFPB Director Russell Vought issued a stop-work order, cut off federal funding entirely, and began firing employees. By May 2025, nearly 70 consumer protection guidance documents were revoked in a single announcement, stripping fair lending protections, fintech oversight, and consumer credit reporting accountability. The agency had returned over $21 billion to consumers defrauded by financial institutions since its founding following the 2008 financial crisis.

[8] 2025 Dismissals of U.S. Inspectors General — Wikipedia. On January 24, 2025, Trump fired at least 17 inspectors general via email, citing “changing priorities.” None were alleged to have been ineffective or to have engaged in unethical behavior. Several had been investigating matters related to the business interests of Trump allies, including a Defense Department IG review of SpaceX’s compliance with federal reporting protocols. See also: American Oversight investigation.

[9] House Passes 10-Year Federal Moratorium on State AI Regulation — Goodwin Law. The provision, included in the “One Big Beautiful Bill Act,” would have preempted existing AI laws in California, Colorado, New York, Illinois, and Utah, as well as over 1,000 pending state AI bills nationwide.

[10] Senators Reject 10-Year Ban on State-Level AI Regulation — TIME. The Senate voted 99-1 in an overnight session to strip the moratorium, adopting an amendment by Sen. Marsha Blackburn (R-TN). A revised five-year version was proposed and also rejected. The provision was removed before the bill was signed into law on July 4, 2025.

[11] China’s Tech Crackdown — Hertie School / The China Project. Beginning in late 2020, regulators issued platform antitrust guidelines, updated the Anti-Monopoly Law, and imposed record fines — including a multibillion-RMB penalty on Alibaba. The stated goal was a “standardized, healthy, and sustainable” platform economy aligned with Party objectives on data control and social stability.

[12] The “AI fund” concept draws on proposals circulating in several policy communities. The basic idea: treat AI-generated superprofits the way Norway treats oil revenue — earmark a portion for public benefit through a sovereign-style fund that distributes dividends for reskilling, community investment, and workforce transition. Whether this is feasible at the federal level given US budget politics is genuinely above my pay grade. State-level versions may be more realistic.

[13] Gradual Disempowerment — David Duvenaud et al. The core thesis: even without a rogue AI “takeover,” incremental AI development poses existential risk by replacing human roles in labor, decision-making, and companionship. Societal systems currently align with human interests partly because they depend on human participation; as that dependency decreases, alignment erodes. Presented at ICML 2025. See also: 80,000 Hours podcast with Duvenaud and Anthropic’s own research on disempowerment patterns in real-world AI usage.

[14] Anthropic’s Responsible Scaling Policy. Anthropic has published multiple versions of its RSP framework, most recently Version 3.0 in February 2026. The policy outlines thresholds for AI capability levels and corresponding safety requirements. Anthropic activated ASL-3 safeguards in May 2025. The framework influenced similar commitments from OpenAI and Google DeepMind.

[15] Dario Amodei — Machines of Loving Grace. A 50+ page essay sketching what a world with powerful AI could look like if things go well — covering biology, neuroscience, economic development, and governance. See also his follow-up On DeepSeek and Export Controls on why maintaining democratic nations’ AI leadership matters, and The Adolescence of Technology on navigating this transitional period responsibly.

[16] AI Lawsuits in 2026: Settlements, Licensing Deals, Litigation — AI Business. Anthropic’s $1.5 billion settlement in September 2025 established the costliest precedent in AI copyright history, contributing to a broader industry shift toward systematic licensing frameworks.

[17] Wikipedia Will Share Content with AI Firms in New Licensing Deals — Ars Technica. As of January 2026, the Wikimedia Foundation signed licensing agreements with major AI companies after years of criticism that LLM providers trained on Wikipedia’s content without complying with Creative Commons attribution and share-alike requirements. See also: Wikipedia Asks AI Companies to Stop Scraping Data — CNET.